Cognitive Predators

Abductive Mastery and the New Hierarchy of Human-AI Intelligence

Image Generated with Google Gemini

“It is a great mistake to suppose that abduction implies any certainty, or even any high probability, of its conclusion. Its only justification is that it is the only possible hope of regulating our future conduct rationally.”

— Charles Sanders Peirce, Collected Papers, 5.171

Imagine a seasoned strategist reviewing an AI-generated report on geopolitical risks: the analysis is fluent, polished, and statistically impeccable - yet it misses a subtle anomaly that could shift the entire frame of the crisis. A junior analyst accepts it as authoritative; the expert spots the “fluent mediocrity” and intervenes with a single creative pivot, extracting far deeper insight. That gap, between the analyst who accepts the output and the expert who sees through it, is what this article is about.

Artificial intelligence systems are not approximations of human cognition; they are powerful engines of pattern-based inference that operate without genuine understanding. The cognitive faculty they cannot replicate is abduction - the creative, hypothesis-forming leap that connects surprising facts to new explanatory frameworks, and this gap defines the decisive boundary between what machines can do and what expert practitioners alone can provide. This article argues that this creative reasoning capacity, grounded in deep domain expertise, hard-won intuition, and disciplined inquiry, is the apex cognitive skill of the AI era. Practitioners who master it will function as cognitive predators: professionals who extract intelligence of a qualitatively higher order from AI systems than their less expert peers can generate. To develop this argument, the article distinguishes the three modes of inference, identifies the structural limits of machine architecture, examines the role of expertise and hard-won intuition in enabling creative leaps, and proposes a three-tier cognitive hierarchy - cognitive predators, competent users, and naive consumers - whose separation is determined not by access to tools but by what practitioners bring to them.

The Misframed Debate

The arrival of capable artificial intelligence in professional settings has generated two symmetrical errors that share a common and consequential root. The first is the error of dismissal: AI systems are sophisticated autocomplete, incapable of producing outputs a trained professional should take seriously. The second is the error of displacement: AI will render human expertise obsolete by producing outputs that are indistinguishable from, or superior to, those of skilled practitioners. Both errors assume that what AI does and what humans do are the same kind of thing, that the difference is one of degree rather than kind. That assumption is wrong, and its wrongness has direct implications for how professionals should position themselves relative to these tools.

A large language model does not think, understand, or reason in any meaningful sense; it performs extraordinarily sophisticated pattern completion across enormous amounts of training text, producing outputs that cohere because coherence is common in that data.[1] Genuine cognition involves the active construction of meaning by an embedded subject, a sentient being with experience, intention, stake, and the capacity for genuine surprise, and AI systems have none of these properties. The practical implication is direct: AI systems will never independently generate the insight that changes the frame of a problem. They can explain, organize, and combine ideas, but only within the structure they are given, which means their output is only as strong as the frames and guidance their human partners provide.

This dynamic yields a three-tier cognitive hierarchy that frames the analysis throughout this article. At the apex are cognitive predators: experts with strong domain knowledge, hard-won intuition, and skill in creative abductive reasoning who use AI as a targeted synthesis tool. The middle tier consists of competent users: practitioners with enough subject knowledge and technical fluency to extract value from AI, but whose outputs remain in the realm of elaboration rather than genuine insight. At the base are naive consumers: users who treat AI outputs as answers rather than hypotheses to be tested, which leaves them the most vulnerable to fluent mediocrity, meaning polished results that lack real analysis. Understanding why this hierarchy exists, and why it will not collapse, requires a precise account of what machines actually do and what they cannot do by design.

What Machines Actually Do

Machine learning systems are, at bottom, pattern-matching engines: they learn structure from a training dataset and use those patterns to map inputs to outputs while minimizing prediction error.[2] A large language model works by predicting the next most likely piece of text based on the context it has already seen, and its writing seems coherent because coherence appears frequently in its training data. This design gives AI systems real and remarkable strengths: they can pull together information from massive volumes of text at speeds no human analyst can match, maintain a consistent thread across long documents, surface relevant precedents, and reorganize complex material into whatever structure a user requests. These capabilities constitute the synthesis engine’s genuine power - speed, breadth, and availability at effectively no cost.
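The next-word mechanism described above can be made concrete with a deliberately tiny sketch. It is not how a production language model is built: real systems use neural networks over subword tokens, while this toy simply counts which word followed which in a four-sentence corpus invented for illustration. The function names (train_bigram, predict_next, complete) and the corpus are assumptions of the sketch, not anything drawn from a real library.

```python
from collections import Counter, defaultdict

# Hypothetical four-sentence "training corpus", invented for illustration.
CORPUS = [
    "the risk is rising",
    "the risk is contained",
    "the risk is rising fast",
    "the outlook is stable",
]

def train_bigram(corpus):
    """Count, for each word, which words followed it in the training text."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

def complete(counts, start, max_words=5):
    """Extend `start` by repeatedly appending the most likely next word."""
    words = [start]
    for _ in range(max_words):
        nxt = predict_next(counts, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)

counts = train_bigram(CORPUS)
print(complete(counts, "the"))           # fluent continuation of a familiar pattern
print(predict_next(counts, "anomaly"))   # a word absent from training yields nothing
```

Even at this scale the toy shows the two behaviors the paragraph describes: it continues a familiar opening with the most common pattern in its data, and it has nothing to offer for a word its corpus never contained.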

What this design cannot do is create a truly new explanatory framework, because the model has no stake in the problem, no lived experience in the domain, and no access to real-world evidence that would make one explanation more convincing than another. An AI system prompted without a solid domain frame will default to fluent mediocrity: outputs that look plausible because they follow familiar patterns but remain analytically thin and difficult for non-experts to evaluate. This creates a real professional risk in AI-augmented environments: experts and novices do not benefit equally from the same tools, because experts alone can distinguish the appearance of insight from the real thing.

The machine also lacks any capacity to experience an anomaly as anomalous. An AI can detect that something deviates from a pattern, but it cannot feel that something is surprising, odd, or wrong; it processes every input through the same statistical machinery with no internal pressure to resolve a mismatch. This distinction between detecting a deviation and experiencing one is not merely a small technical gap but a foundational divide. Abduction starts exactly where pattern-matching ends: when a prepared human mind notices that something is off in the expected picture and feels driven to explain why.

The Logic of Abduction

Three fundamental modes of inference define the landscape of human reasoning, and understanding their differences is essential for grasping what AI can and cannot do. Deduction moves from general rules to specific conclusions: if all humans are mortal and Socrates is human, then Socrates is mortal, with the conclusion already contained in the premises. Induction moves in the opposite direction, building a probable rule from repeated observations: the sun has risen every day in recorded history, so it will likely rise tomorrow. Abduction, the third and most distinctive mode, begins with a surprising or puzzling fact and proposes the hypothesis that, if true, would best explain it.[3] Peirce’s formulation makes the logic clear: a surprising fact C is observed; if hypothesis A were true, C would follow naturally; therefore, there is reason to suspect that A may be true.[4]
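The three modes can be set side by side in schematic form. What follows is an illustrative notation, not Peirce’s own: the predicate letters H (human), M (mortal), and R (the sun rose), the constant s (Socrates), and the day indices are assumptions introduced for the sketch, while A and C are the hypothesis and surprising fact from the formulation above. The squiggly arrow marks an inference that is only probable or suggestive, in contrast to the turnstile of strict deduction. The block assumes the amsmath and amssymb packages.

```latex
\begin{align*}
\text{Deduction:} \quad & \forall x\,\bigl(H(x) \rightarrow M(x)\bigr),\; H(s) \;\vdash\; M(s) \\
\text{Induction:} \quad & R(d_1),\, R(d_2),\, \ldots,\, R(d_n) \;\leadsto\; R(d_{n+1}) \\
\text{Abduction:} \quad & C,\; (A \rightarrow C) \;\leadsto\; \text{there is reason to suspect } A
\end{align*}
```

Read as strict deduction, the abductive schema would be the fallacy of affirming the consequent, which is why it licenses only suspicion and never certainty, exactly as the epigraph from Peirce warns.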

Abduction does not prove its conclusion. It proposes a possible explanation worth investigating, which is why Peirce saw it as the driving force behind all scientific discovery. Three features set this kind of inference apart from anything an AI system can do. First, it is genuinely generative: it introduces a framework or hypothesis that was not already present in the problem space. Second, it is grounded in context and lived experience: the creative leap is shaped by the reasoner’s accumulated sense of which explanations have worked in similar situations, which is why a novice confronting the same puzzling data may not even notice there is a puzzle to solve. Third, it is inherently evaluative: the practitioner does not merely generate a hypothesis but also judges its promise against a deep reservoir of domain knowledge, so that the idea and its assessment of value arise together.

These three features (generativity, contextual grounding, and built-in judgment) are exactly what current AI architectures cannot reproduce. When an AI system produces something that appears to be a new hypothesis, it is recombining statistically plausible patterns from its training data, not engaging in genuine reasoning. Because those recombinations can look like insight, especially to practitioners without deep domain expertise, they represent a real professional trap: fluent mediocrity mistaken for genuine analysis. A machine can elaborate within the frames it is given; it cannot choose the frames themselves. Understanding why that capacity for choosing frames belongs only to expert human practitioners requires a clear account of what expertise actually is.

How Expertise and Hard-Won Intuition Enable Abduction

The capacity for abductive reasoning depends on the depth and internal organization of a practitioner’s domain expertise, and it is not evenly distributed across practitioners. Research on expert performance shows that what separates elite practitioners from merely competent ones is not the sheer volume of information they hold but the structure of how they hold it: the richness of their pattern libraries, the speed with which they recognize meaningful cues, and the density of the connections linking concepts in their domain.[5] These structures allow experts to grasp the significance of a situation rapidly and without conscious step-by-step analysis, producing the confident orientation that looks like intuition but is in fact the outcome of thousands of hours of deliberate practice.

Gary Klein’s research on decision-making in real-world environments, among firefighters, military commanders, and intensive care nurses, shows that experts under time pressure do not generate and compare multiple options.[6] Instead, they recognize the situation as a familiar type and mentally run the first workable course of action forward. This recognition-and-simulation cycle is the cognitive foundation from which abductive leaps arise when an expert encounters an anomaly, a pattern that does not match the familiar template. The richness of their domain knowledge creates internal pressure. The mismatch is not simply registered but felt as a problem demanding explanation, and the creative hypothesis emerges from the expert’s ability to search across a large, densely structured knowledge network for a framework that resolves it. A novice, lacking that network, may not experience the anomaly as anomalous at all, which means expertise shapes not only the answers that can be generated but the very questions that can be perceived.

Michael Polanyi’s concept of tacit knowledge, the knowledge experts carry that they cannot fully put into words, clarifies the dimension of expertise most relevant to this argument.[7] Polanyi’s core claim is that we know more than we can tell: the master chess player cannot fully explain the whole-situation read that signals a dangerous position; the experienced clinician cannot reduce diagnostic intuition to a checklist. This hard-won, inarticulable layer is not a limitation but the accumulated residue of thousands of hours of practice, shaped by feedback loops that refine pattern recognition below conscious awareness. It is precisely this dimension that AI systems cannot acquire from text, regardless of how much they read, because this kind of knowledge is formed through embodied experience in the world, not through written descriptions of that experience.

Kahneman’s dual-process framework offers a useful way to describe how this expertise operates in practice.[8] System 1 - fast, automatic, and pattern-driven - generates the initial sense that something deserves attention, that a situation fits a known type, or that a particular explanation feels right. System 2 - slow, deliberate, and rule-governed - tests, articulates, and communicates the insight that System 1 surfaced. In abductive reasoning, System 1 supplies the hypothesis and System 2 evaluates and expresses it. Modern AI systems produce text that resembles System 2 reasoning at remarkable speed and scale, but what they are actually doing is closer to System 0: probabilistic pattern generation without grounding, intention, or accountability.[9] The machine does not experience anomalies, feel cognitive tension, or grasp why a pattern matters; it can widen the space of possible hypotheses, but it cannot replace the expert mind from which genuine insight arises.

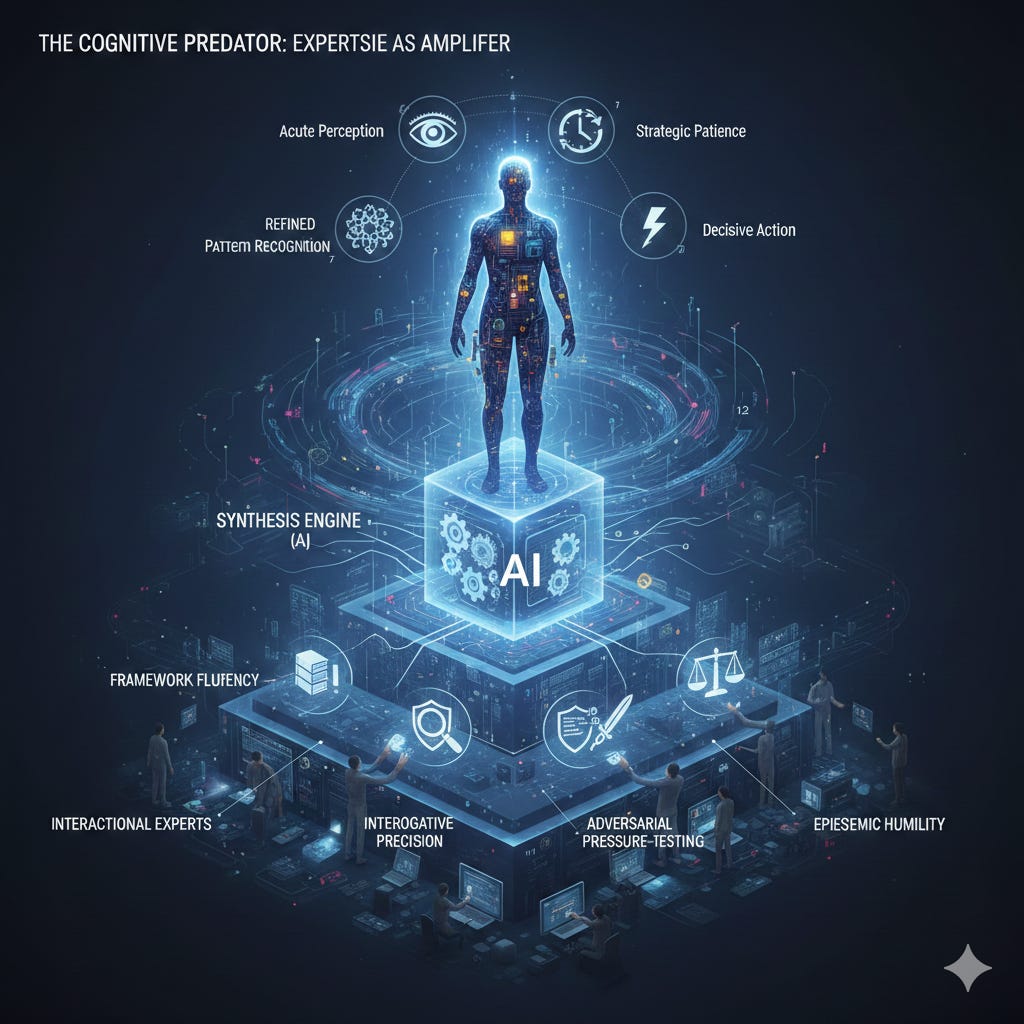

The Cognitive Predator: Expertise as Amplifier

In ecological systems, apex predators are defined not by a single trait but by the compounding interaction of multiple superior capabilities: acute perception, refined pattern recognition, strategic patience, and decisive action at the moment opportunity appears. The cognitive predator in professional knowledge work is defined in the same way.[10] Deep domain expertise, hard-won intuition, the capacity for creative abductive reasoning, and the self-awareness to separate genuine insight from fluent mediocrity are not isolated advantages but mutually reinforcing ones: strong expertise creates richer pattern libraries, which sharpen anomaly detection, which improves hypothesis generation, which enables more powerful direction of the synthesis engine. The ecological analogy captures the compounding nature of the advantage, which is why the cognitive predator’s superiority is categorical rather than incremental.

Collins and Evans’s taxonomy of expertise clarifies why depth of domain knowledge, not simple familiarity with AI tools, determines who occupies the apex position in AI-augmented professional work.[11] Their distinction between being able to talk intelligently about a field and being able to actually advance it maps directly onto the difference between competent and apex AI use. The practitioner who can talk the talk can craft reasonable prompts and judge AI outputs for surface plausibility. The practitioner who can walk the walk can introduce new conceptual frames, detect when the AI has produced sophisticated-sounding nonsense, and steer inquiry toward genuine insight. It is this deeper mastery that gives the cognitive predator an irreducible advantage, and it is an advantage that cannot be gained simply by becoming more proficient with the tools.[12]

A concrete illustration sharpens the point. A practitioner analyzing a strategic environment introduces specific theoretical lenses: Dolman’s theory of relative positional advantage from Pure Strategy; the classical Chinese concept of wēijī (危機), where crisis signifies both danger and a pivotal moment for decisive action; and the judo principle of kuzushi, the deliberate unbalancing that precedes decisive engagement.[13] These are not keywords or search terms but complex, pre-structured analytical frameworks that reorganize the evidential field the moment they are applied. Once the practitioner supplies such a framework, the AI system can immediately reprocess everything it knows through those lenses, producing structured analysis, counter-arguments, and implications at a speed that would take an unaided human hours to generate.

But the choice of framework, the creative judgment that this is the right lens for this problem, does not come from the system. It comes from the practitioner’s accumulated sense of which frameworks have proven powerful in analogous situations, and the AI can elaborate a frame only after the expert selects it. The amplification effect is not incremental.[14] An expert does not produce outputs that are just slightly better than those of a less-skilled practitioner; they produce outputs categorically different in analytical depth, explanatory power, and practical reliability. A well-chosen frame enables the synthesis engine to generate analysis that is coherent, illuminating, and actionable; a poorly chosen frame, or the absence of any explicit frame, yields fluent mediocrity: analysis that looks plausible but is only as good as the limited framing the practitioner supplied.

The Art of Inquiry as Professional Discipline

Mastery of inquiry in the AI age is a professional discipline, not a technical skill, grounded in the same habits that have always set strong analysts and advisors apart: the ability to define the right problem before attempting to solve it, to notice when an existing framework no longer serves, to borrow concepts from other fields that reorganize the problem, and to remain honest about the difference between what one genuinely knows and what one merely assumes. Karl Weick’s work on how people make sense of complex organizations illuminates why this distinctly human capacity cannot be manufactured by a machine.[15] Making sense of a situation is not the passive intake of information but the active construction of a plausible explanation by someone embedded in a situation that matters to them and carries real consequences, a condition AI systems do not share because they have no lived context and no stake in outcomes. The four disciplines of inquiry described below are the practical expression of this irreducibly human contribution to any AI partnership.

Framework fluency is the cultivated ability to draw on a broad and deep repertoire of analytical lenses across disciplines, historical periods, and domains of practice. A practitioner limited to the frameworks of a single field is constrained to the creative possibilities those familiar lenses allow, so the synthesis engine can only ever elaborate variations on what the practitioner already knows. Reading widely is not an intellectual indulgence but a professional investment with compounding returns in the AI age, because every additional framework in the practitioner’s repertoire expands the space of hypotheses the synthesis engine can be directed to explore.[16]

Interrogative precision is the ability to pose questions at the right level of abstraction and in the right sequence to unlock the thinking a problem requires. A question built on the wrong frame will receive a fluent elaboration of that wrong frame, and no subsequent prompting will recover the ground lost by the initial misdirection. A practitioner with interrogative precision knows how to shift levels of analysis, from the specific to the structural, from the historical to the principled, from the descriptive to the causal, and can recognize when a given level has reached its explanatory limit.

Adversarial pressure-testing is the habit of treating AI-generated outputs not as conclusions but as first drafts that require rigorous critique. This means identifying the hidden assumptions inside an AI response, introducing evidence the system has not considered, and asking what a well-informed skeptic would say against the analysis as presented. A practitioner who applies this habit consistently produces work of a fundamentally different character than one who accepts the first plausible output, because pressure-testing is where the synthesis engine’s power is most effectively exploited and where its blind spots are most reliably caught.

Knowing the limits of your tools is the discipline of maintaining a clear-eyed internal model of what AI systems can and cannot do.[17] A language model will generate confident-sounding analysis in domains where its training data is thin, biased, or outdated; it will produce narratives that hold together internally but are factually wrong; and it will elaborate a poorly chosen frame with the same fluency it applies to a well-chosen one. The practitioner who understands these failure modes can catch them before they shape decisions; the practitioner who does not is exposed in direct proportion to their confidence in the tool.

The Emerging Cognitive Hierarchy

The combined effect of the dynamics described in this article is the emergence of a new cognitive hierarchy in professional knowledge work, one that will not flatten as tools become more widely available.[18] The advantage of the apex practitioner is not a matter of having better tools but of what they bring to those tools, and as AI systems grow more capable, the quality of the framing that guides them becomes more consequential, not less. This is the structural reason the hierarchy deepens rather than collapses as AI capability increases: the more powerful the synthesis engine, the greater the difference in output between a well-chosen frame and a poorly chosen one.

Cognitive predators - practitioners with deep domain expertise, broad cross-disciplinary knowledge, hard-won intuition, and mastery of creative abductive reasoning - use AI as a highly capable synthesis engine, guiding it with well-chosen frameworks and examining its outputs through disciplined pressure-testing. Their work is qualitatively different from anything they could produce without AI and qualitatively different from what less expert practitioners produce with the same tools. Competent users generate work that is better than what they could produce unaided, but their analysis remains within the space of elaboration: structured, competent, and analytically conventional, because they are organizing information within inherited frameworks rather than generating new ones. Naive consumers face the central irony of the AI age: the tools most accessible to non-experts are also the most dangerous in high-stakes analytical settings, precisely because the output’s fluency can conceal its limitations, and the non-expert often lacks the domain knowledge to recognize fluent mediocrity for what it is.[19]

Conclusion

The arrival of AI as a professional tool does not diminish the value of human cognition; it concentrates it. The operations AI now performs well (building generalizations from data, elaborating frameworks, synthesizing rapidly, and articulating results) are available at near-zero cost and near-instant speed, which means they no longer define professional advantage. The operation AI cannot perform, the creative act of generating a promising new explanatory hypothesis grounded in expert experience, has not become cheaper. Its supply has not increased, so it has become scarcer relative to the explosive growth in everything else, and that relative scarcity is what raises its value.

The professionals who will define the upper tier of knowledge work understand this and are positioning themselves accordingly. They are building broad and deep expertise not in spite of AI but because of it, since depth is now the rate-limiting factor in the quality of intelligence that can be extracted from the most powerful synthesis tools ever created. They are cultivating the art of inquiry as a professional discipline: expanding their repertoire of analytical frameworks, refining the precision of their interrogations, practicing adversarial pressure-testing, and maintaining an honest, clear-eyed awareness of the limits of their tools. They treat AI as an instrument to be directed, not an oracle to be believed.

The age of AI does not diminish the value of human intellect as such; it diminishes the value of one kind of intellect, the kind prized for encyclopedic recall and retrieval. What begins now is the age of the question. The right question, at the right level of abstraction, applied to the right evidential field, through the right conceptual lens, by the right expert at the right moment, is the decisive unit of professional value. It has always been rare, and it is the only kind of thinking that cannot be automated. The practitioners who can pose it are the cognitive predators of the emerging knowledge economy: they will not be replaced by AI because they do what AI cannot, and they will not be matched by less expert practitioners using the same tools because the quality of the synthesis engine’s output is only as good as the quality of the framing the practitioner provides.

The views expressed here are my own and do not reflect the official policy or position of the Department of the Army, the National Guard, the Department of Defense, or the U.S. Government.

Notes

[1] Tom Mitchell, Machine Learning (New York: McGraw-Hill, 1997), 2. Mitchell’s canonical definition formalizes machine learning as optimization over a performance measure defined relative to a training distribution, with no mechanism by which a system could generate explanatory frameworks that transcend that distribution. This architectural constraint is not a temporary limitation to be overcome by scale but a structural feature of the learning paradigm itself. Large language models are extraordinarily powerful instantiations of this paradigm, but they remain function approximators whose outputs are, in a precise mathematical sense, interpolations within the space of patterns their training data defined. The definition contains no account of how a system might introduce a genuinely novel explanatory framework, which is the precise capacity this article argues is irreplaceable.

[2] Mitchell, Machine Learning, 2.

[3] Charles Sanders Peirce, Collected Papers of Charles Sanders Peirce, vols. 1–6, ed. Charles Hartshorne and Paul Weiss (Cambridge: Harvard University Press, 1931–1935), 5.171–5.173. Peirce used the terms “abduction” and “retroduction” interchangeably across his writings, settling on “abduction” in his later work. His canonical syllogistic formulation—the surprising fact C is observed; but if A were true C would be a matter of course; therefore there is reason to suspect A—makes explicit that the conclusion is never established, only proposed as a candidate worthy of further investigation. The critical point is that abduction is the only one of the three inference modes that introduces genuinely new content into the reasoning process; deduction and induction operate entirely on propositions already available to the reasoner. Peirce regarded abduction as the engine of all scientific discovery, constrained but never replaced by the subsequent inductive testing of hypotheses.

[4] Igor Douven, “Abduction,” in The Stanford Encyclopedia of Philosophy, ed. Edward N. Zalta, Fall 2021 Edition, https://plato.stanford.edu/archives/fall2021/entries/abduction/. Douven provides the most comprehensive contemporary treatment of abduction’s philosophical lineage, tracing its development from Peirce through twentieth-century philosophy of science. The phrase “inference to the best explanation” was introduced by Gilbert Harman in “The Inference to the Best Explanation,” Philosophical Review 74, no. 1 (1965): 88–95, and has since become the dominant label in analytic epistemology. Douven’s treatment is particularly useful for its careful distinction between abduction and Bayesian inference, demonstrating that the two are not equivalent even when they converge on the same conclusion. The key difference is that abduction is a rule for generating candidate hypotheses, whereas Bayesian inference is a rule for updating probabilities among hypotheses already in view.

[5] K. Anders Ericsson, Ralf Th. Krampe, and Clemens Tesch-Römer, “The Role of Deliberate Practice in the Acquisition of Expert Performance,” Psychological Review 100, no. 3 (1993): 363–406. Ericsson and colleagues established across multiple domains—chess, music, athletics, medicine—that the cognitive architecture distinguishing elite practitioners from competent ones is not primarily a function of innate ability but of accumulated deliberate practice structured around immediate feedback. The mechanism of interest is the progressive refinement of internal representations: experts develop richer, more densely interconnected mental models that allow them to chunk complex patterns as single cognitive units. This chunking is what enables the rapid recognition of meaningful structure in novel situations that appears to observers as intuition. The implication for the present argument is that the abductive substrate—the pattern library from which anomaly-recognition and hypothesis-generation emerge—is built through years of domain-embedded experience that no amount of text processing can replicate.

[6] Gary Klein, Sources of Power: How People Make Decisions (Cambridge: MIT Press, 1998), 31–44. Klein’s Recognition-Primed Decision model emerged from field studies of naturalistic decision-making in high-stakes environments including firefighting, military command, and intensive care nursing. His central finding contradicts classical decision theory: experts under time pressure do not enumerate and compare multiple options but instead recognize a situation as a familiar type and mentally simulate the first viable course of action. The simulation step is critical—it is not passive recognition but active forward projection that tests whether the recognized pattern actually fits the current situation. Klein’s model maps directly onto the abductive structure described in this article: situation recognition corresponds to the anomaly-detection that triggers abductive inquiry, and the simulation-evaluation loop corresponds to the simultaneous generation and assessment of the explanatory hypothesis.

[7] Michael Polanyi, The Tacit Dimension (Garden City: Doubleday, 1966), 4. Polanyi’s foundational claim, “we can know more than we can tell,” identifies the tacit dimension as the component of expert knowledge that resists both articulation and formalization. His examples range from the recognition of faces (we cannot specify the features that make a face familiar) to the diagnosis of disease (the experienced clinician’s intuition cannot be fully reduced to an explicit decision tree). The tacit dimension is not a gap to be filled by more articulate description; it is constitutively dependent on embodied, practice-embedded engagement with the world. This is the feature of human expertise that makes it irreproducible by systems trained on text, however vast the corpus: text is propositional representation, and the tacit dimension is precisely what propositions cannot capture.

[8] Daniel Kahneman, Thinking, Fast and Slow (New York: Farrar, Straus and Giroux, 2011), 11–13. Kahneman’s System 1 / System 2 framework synthesizes several decades of dual-process research in cognitive psychology into a unified descriptive model. System 1 is fast, automatic, and associative—it runs continuously and generates intuitive responses, emotional reactions, and pattern recognitions without deliberate effort. System 2 is slow, effortful, and rule-governed—it monitors System 1 outputs, applies explicit reasoning, and endorses or overrides System 1’s proposals. The key point for the present argument is that System 1 is the locus of tacit expertise: the master practitioner’s rapid recognition of meaningful patterns is a System 1 capability built through years of deliberate practice, and AI systems have no genuine analog of System 1 because they lack the grounded experience from which System 1 competence is built.

[9] M. Chiriatti, M. Bergamaschi Ganapini, E. Panai, B. K. Wiederhold, and G. Riva, “System 0: Transforming Artificial Intelligence into a Cognitive Extension,” Cyberpsychology, Behavior, and Social Networking 28, no. 7 (2025): 534–542, published online June 12, 2025, https://doi.org/10.1089/cyber.2025.0201, PMID 40504761; “System 0: Is artificial intelligence creating a new way of thinking, an external thought process outside of our minds?” Catholic University of the Sacred Heart, October 22, 2024, https://techxplore.com/news/2024-10-artificial-intelligence-external-thought-minds.html.

[10] Ethan Mollick, Co-Intelligence: Living and Working with AI (New York: Portfolio/Penguin, 2024), 47–62. Mollick’s field observations of AI-augmented professional work across multiple industries consistently identify the same rate-limiting factor: not model capability but the prompter’s ability to frame problems and apply appropriate mental models. His observations are consistent with the amplification dynamic described here—that the synthesis engine’s output quality is bounded by the quality of the framing supplied. Mollick’s account is particularly valuable because it is empirically grounded in actual professional practice rather than theoretical projection, and because it resists both the dismissal and displacement errors identified at the outset of this article. His conclusion—that the most effective AI users are those who bring genuine domain expertise to the interaction—directly supports the cognitive predator thesis.

[11] Harry Collins and Robert Evans, Rethinking Expertise (Chicago: University of Chicago Press, 2007), 14–35. Collins and Evans develop a taxonomy of expertise that distinguishes, among other categories, “interactional expertise”—sufficient mastery of a domain’s discourse to engage credibly with its practitioners—from “contributory expertise”—sufficient mastery to extend, challenge, and redirect the domain itself. The distinction matters for the present argument because interactional expertise is precisely what AI systems can now simulate at low cost: they can engage with a domain’s vocabulary, reproduce its conventions, and produce outputs that pass surface scrutiny. Contributory expertise, by contrast, requires the accumulated tacit knowledge and genuine domain immersion that AI systems cannot acquire. The cognitive predator operates at the level of contributory expertise; the competent user operates at the level of interactional expertise; and the gap between them is not a function of tool access.

[12] Everett C. Dolman, Pure Strategy: Power and Principle in the Space and Information Age (London: Frank Cass, 2005).

[13] Everett Dolman, Pure Strategy: Power and Principle in the Space and Information Age, 1st edition (London; New York: Routledge, 2005), 6, 11. Dolman describes strategy as “a plan for attaining continuing advantage” because “the strategist can never finish the business of strategy, and understands that there is no permanence in victory – or defeat.” Wēijī (危機) is the classical Chinese compound conventionally rendered in English as “crisis”; it is composed of the character for danger (wēi, 危) and the character jī (機), which more precisely denotes a pivotal moment or hinge point requiring decisive action, so the common gloss “opportunity” overstates the optimistic valence of the original. Kuzushi (崩し) is a foundational concept in judo denoting the deliberate unbalancing of an opponent to create conditions in which a decisive technique can be applied; as a strategic analogy it refers to operations designed to create structural instability in an adversary’s system before delivering a decisive action. The value of these frameworks in the present context is their non-obvious applicability: their selection requires the kind of cross-domain pattern recognition that characterizes abductive expertise.

[14] David Perkins, King Arthur’s Round Table: How Collaborative Conversations Create Smart Organizations (Hoboken: Wiley, 2003), 11–28. Perkins develops the concept of “knowledge assets” that organizations and individuals bring to collaborative problem-solving, arguing that the quality of collective intelligence is non-linearly dependent on the quality of the conceptual frameworks participants supply. His argument supports the non-linearity claim made here: the synthesis engine does not produce outputs that are proportionally better when given better frames, but categorically better—because a well-chosen frame opens up entirely different regions of the analytic space. The practical implication is that the expert’s contribution to AI-augmented analysis is not additive but multiplicative.

[15] Karl Weick, Sensemaking in Organizations (Thousand Oaks: Sage Publications, 1995), 4–11. Weick argues that organizational actors do not passively receive and process information but actively construct plausible accounts of their situations—accounts that are simultaneously shaped by, and in turn shape, the ongoing flow of events. Sensemaking is retrospective (we can only make sense of what we have already experienced), enacted (our actions partly constitute the situations we are trying to understand), and social (our frameworks are inherited from and validated within communities of practice). These features make sensemaking irreducibly dependent on an embedded, situated subject—properties that AI systems, which have no ongoing situation, no stake in outcomes, and no membership in lived communities of practice, do not possess. The practitioner who brings genuine sensemaking capacity to the AI partnership is contributing something that cannot be generated by the system itself, however capable.

[16] Jim Mattis and Bing West, Call Sign Chaos: Learning to Lead (New York: Random House, 2019): “If you haven’t read hundreds of books, you are functionally illiterate, and you will be incompetent, because your personal experiences alone aren’t broad enough to sustain you.”

[17] Nassim Nicholas Taleb, Antifragile: Things That Gain from Disorder (New York: Random House, 2012), 225–242. The concept most directly relevant here is Taleb’s via negativa: the principle that robustness is often better achieved by removing sources of fragility than by adding protective measures, and that knowing what to avoid is frequently more valuable than knowing what to pursue. Applied to AI-augmented professional work, the via negativa suggests that the practitioner’s awareness of what AI systems cannot do—the specific failure modes of confident-sounding but analytically groundless output—is at least as valuable as their awareness of what AI can do well. Knowing the limits of your tools is not a passive virtue but an active discipline: the practitioner who has mapped the AI’s failure modes can exploit its strengths while systematically guarding against its weaknesses.

[18] Tomás Chamorro-Premuzic, I, Human: AI, Automation, and the Quest to Reclaim What Makes Us Unique (Boston: Harvard Business Review Press, 2023), 88–107. Chamorro-Premuzic argues that the cognitive capacities most resistant to AI displacement are precisely those that are most difficult to measure and most dependent on accumulated human experience: judgment, creativity, and the ability to navigate genuinely novel situations. His analysis converges with the present argument from a different direction—empirical organizational psychology rather than philosophy of inference—and reaches a compatible conclusion: the professionals who will thrive in AI-augmented environments are those who invest in deepening distinctively human capabilities rather than competing with AI on tasks AI performs well.

[19] Bill Ault, “AI Enabled Dunning-Kruger Journey: Teleporting to Mount Stupid,” Substack, 11 February 2026, https://open.substack.com/pub/billault/p/ai-enabled-dunning-kruger-journey?utm_campaign=post-expanded-share&utm_medium=web.